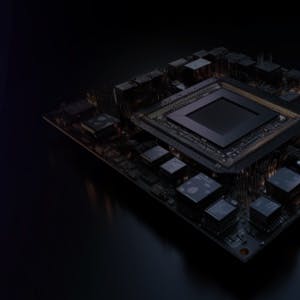

Generative AI Infrastructure

Cohere launches Embed V3 for enhanced semantic search in LLMs

- Canadian AI startup Cohere has unveiled Embed V3, a new version of its embedding model. The model is designed for semantic search and for applications built on large language models (LLMs).

- Embed V3 converts data into numerical representations called "embeddings." Its main features include improved matching of documents to queries, more efficient retrieval-augmented generation (RAG), and lower operating costs for LLM applications.

- The model aims to address some limitations of LLMs, such as their lack of access to up-to-date information and their tendency to generate false information. It is also compatible with vector compression methods, which can reduce the cost of running vector databases while maintaining high search quality.
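To illustrate what "matching documents to queries" with embeddings involves, here is a minimal Python sketch. It does not call Cohere's API. The vectors are hypothetical 4-dimensional toy values standing in for model output, and ranking by cosine similarity is assumed as the comparison metric. The sketch also shows one common compression method, symmetric int8 scalar quantization. This method is an assumption, since the article does not name the compression methods Embed V3 supports. Storing 1-byte integers instead of 4-byte float32 values cuts vector storage to a quarter.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: dot(a, b) / (|a| * |b|)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def rank_documents(query_vec, doc_vecs):
    """Return document indices sorted from most to least similar to the query."""
    scores = [cosine_similarity(query_vec, d) for d in doc_vecs]
    return sorted(range(len(doc_vecs)), key=lambda i: scores[i], reverse=True)


def quantize_int8(vec):
    """Assumed compression method: symmetric scalar quantization.

    Maps each float to an integer in [-127, 127] using a per-vector scale,
    and returns the integer codes plus that scale (code * scale ~= original).
    """
    max_abs = max(abs(x) for x in vec) or 1.0  # avoid dividing by zero
    scale = max_abs / 127
    return [round(x / scale) for x in vec], scale


# Hypothetical toy embeddings; not real Embed V3 output.
query = [0.9, 0.1, 0.0, 0.2]
docs = [
    [0.1, 0.9, 0.3, 0.0],  # unrelated document
    [0.8, 0.2, 0.1, 0.3],  # closely related document
    [0.5, 0.5, 0.0, 0.1],  # partially related document
]

print(rank_documents(query, docs))  # [1, 2, 0]

# Each vector gets its own positive scale, and cosine similarity is unaffected
# by positive scaling, so the integer codes can be compared directly.
q_codes, _ = quantize_int8(query)
d_codes = [quantize_int8(d)[0] for d in docs]
print(rank_documents(q_codes, d_codes))  # [1, 2, 0]
```

In this toy example the compressed vectors produce the same ranking as the full-precision ones. With real data, quantization introduces small rounding errors that can occasionally reorder documents with very close scores.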
