Generative AI Infrastructure

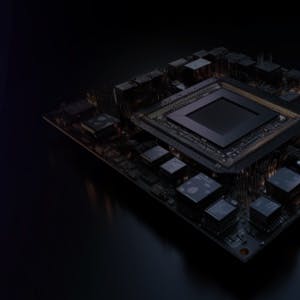

NVIDIA releases HGX H200 AI Chip with improved memory capacity and bandwidth

- NVIDIA has unveiled the HGX H200, an AI chip that upgrades the H100. NVIDIA claims 1.4x the memory bandwidth and 1.8x the memory capacity of the H100, improving its ability to handle intensive GenAI workloads. The first H200 chips are expected to ship in Q2 2024.

- The H200 is a GPU designed for AI workloads, and its key improvements are in memory: it uses the new HBM3e memory specification for faster performance. Memory bandwidth increases to 4.8 TB per second and total memory capacity to 141 GB.

- The H200 remains compatible with systems that support the H100, and major cloud providers, including Amazon, Google, Microsoft, and Oracle, plan to offer the new GPUs.
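
The quoted ratios and absolute figures can be cross-checked with simple arithmetic. The sketch below is illustrative only: it divides the H200 figures given above by the claimed ratios to estimate the H100 baseline they imply. The function and variable names are hypothetical. Because the ratios are rounded, the results are approximate baselines, not official H100 specifications.

```python
# Figures quoted in the article for the H200.
H200_BANDWIDTH_TB_S = 4.8   # memory bandwidth, TB per second
H200_CAPACITY_GB = 141      # total memory capacity, GB

# Claimed improvement ratios over the H100 (rounded in the article).
BANDWIDTH_RATIO = 1.4
CAPACITY_RATIO = 1.8


def implied_baseline(new_value: float, ratio: float) -> float:
    """Return the baseline value implied by a new value and its claimed ratio."""
    return new_value / ratio


h100_bandwidth = implied_baseline(H200_BANDWIDTH_TB_S, BANDWIDTH_RATIO)
h100_capacity = implied_baseline(H200_CAPACITY_GB, CAPACITY_RATIO)

print(f"Implied H100 bandwidth: {h100_bandwidth:.2f} TB/s")  # 3.43 TB/s
print(f"Implied H100 capacity:  {h100_capacity:.1f} GB")     # 78.3 GB
```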
