

Generative AI Infrastructure

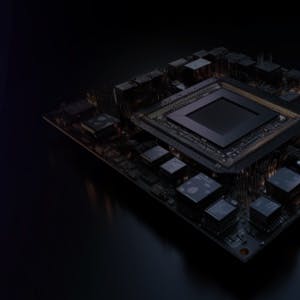

Expedera introduces Origin NPU to provide power and performance to run LLMs on edge devices

- Expedera, a provider of customizable Neural Processing Unit (NPU) semiconductor IP, introduced its Origin NPUs to support generative AI (GenAI) on edge devices. The Origin NPUs natively support generative models, including LLMs and Stable Diffusion, unlocking advanced natural language processing capabilities.

- Expedera claims the Origin NPUs deliver up to 128 TOPS per core at a sustained average utilization of 80%, which it says exceeds industry norms and avoids dark silicon waste.

- The patented packet-based NPU architecture addresses issues such as memory sharing, security, and area penalties faced by conventional AI accelerators, and scales from edge nodes to automobiles.

- Expedera provides customizable neural engine semiconductor IP that improves performance, power, and latency while reducing cost and complexity in edge AI inference applications across a range of industries, including mobile, automotive, and entertainment.
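For readers who want to relate the peak and utilization figures above, the short sketch below multiplies the two to estimate sustained throughput. The function name `effective_tops` is hypothetical, and treating sustained throughput as peak TOPS times average utilization is a simplifying assumption, not a formula from Expedera.

```python
def effective_tops(peak_tops: float, utilization: float) -> float:
    """Estimate sustained throughput as peak TOPS times average utilization.

    Assumption: utilization is a fraction between 0 and 1, and sustained
    throughput scales linearly with it.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return peak_tops * utilization


# Figures claimed in the announcement: up to 128 TOPS per core, 80% average utilization.
sustained = effective_tops(128, 0.80)
print(f"Estimated sustained throughput: {sustained:.1f} TOPS per core")
```

Under that assumption, the claimed figures work out to roughly 102 TOPS of sustained throughput per core.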
