Generative AI Infrastructure

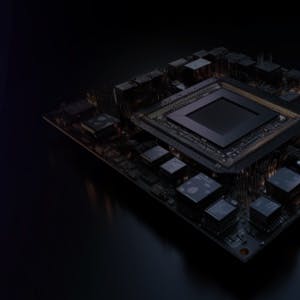

Groq joins NAIRR Pilot to offer access to LPU Inference Engine

- Groq, an AI solutions company, has announced its participation in the National Artificial Intelligence Research Resource (NAIRR) Pilot. The program, run under the US National Science Foundation, aims to provide AI research resources to US researchers and educators.

- As a participant, Groq will give researchers access to its LPU Inference Engine through GroqCloud. The engine is designed for real-time AI inference; according to Groq, it runs inference tasks significantly faster than GPU-based systems while using considerably less energy.

- Researchers can also use Groq technology through the Argonne Leadership Computing Facility (ALCF), which hosts a GroqRack compute cluster. The cluster consists of nine GroqNode servers connected in a rotating multi-node network topology.
