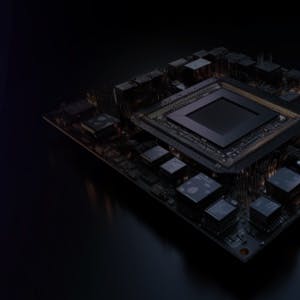

Generative AI Infrastructure

Qualcomm partners with Ampere Computing to provide AI inference solution for LLMs

- Qualcomm has partnered with Ampere Computing, an Oracle-backed chip design company, to create an AI inference solution for LLMs.

- The joint solution consists of a Supermicro server fitted with Ampere CPUs and Qualcomm's Cloud AI 100 accelerator chips. The combined system aims to be easy to deploy and to provide efficient inference for models of varying sizes, and it is expected to meet the computational needs of businesses working with models that have very large parameter counts. Additionally, Ampere plans to release a 256-core server CPU in 2025.
