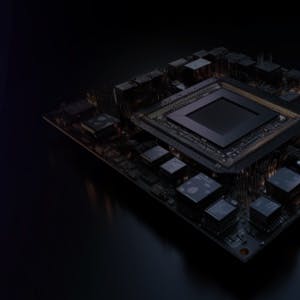

Generative AI Infrastructure

Google DeepMind introduces Frontier Safety Framework for future AI risks

- Google DeepMind has introduced the Frontier Safety Framework, an initiative designed to proactively identify and mitigate severe risks that future AI capabilities could pose. The initial framework is expected to be implemented in early 2025.

- The framework has several key aspects: it examines the risks posed by advanced AI models, focuses on severe threats such as strong autonomous decision-making or cyber capabilities, complements the company's other research and safety measures, and can be adjusted in response to new insights and collaborations.

- Google DeepMind claims that the framework's major advantage is its ability to identify and mitigate potential advanced AI risks ahead of time. Although the company considers the identified risks to be beyond the capabilities of existing models, it expects the framework to help it prepare to address them as they become relevant.
