
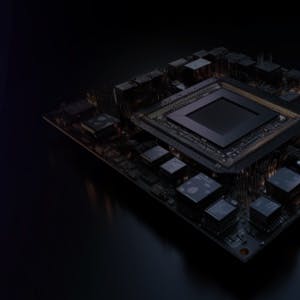

Generative AI Infrastructure

Microsoft launches MInference for faster LLM processing

- Microsoft has introduced "MInference" on the AI platform Hugging Face. The technology aims to reduce the time AI systems take to process large volumes of text input.

- MInference, or "Million-Tokens Prompt Inference," is built to speed up the "pre-filling" stage of language model processing, the stage that typically becomes a bottleneck with long text inputs. Microsoft says it can reduce processing time by up to 90% for inputs equivalent to about 700 pages of text while maintaining accuracy. A hands-on demo lets developers and researchers test its capabilities.

- MInference improves processing speed and efficiency by selectively processing parts of a text rather than the whole input, which reduces the computational resources required and may reduce biases in information retention. The approach also aims to make AI more energy-efficient, addressing environmental concerns associated with large-scale AI systems.
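To give a rough sense of why processing only parts of a long input saves work during pre-filling, the sketch below counts how many query-key pairs an attention layer would score with and without a sparsity pattern. It is an illustration only, not MInference's actual algorithm: the pattern (a few leading "global" tokens plus a recent "window") and the names `dense_causal_pairs`, `sparse_causal_pairs`, `window`, and `n_global` are assumptions chosen for the example.

```python
def dense_causal_pairs(n: int) -> int:
    """Number of (query, key) pairs in full causal attention over n tokens."""
    return n * (n + 1) // 2


def sparse_causal_pairs(n: int, window: int, n_global: int) -> int:
    """Pairs kept when each query attends only to the first `n_global`
    tokens plus the most recent `window` tokens (itself included).

    Hypothetical pattern for illustration; not taken from MInference.
    """
    total = 0
    for q in range(n):
        # Causality allows keys 0..q; the local window starts here.
        local_start = max(0, q - window + 1)
        # Global keys are 0..n_global-1, clipped to the causal range.
        global_end = min(n_global, q + 1)
        # Count only global keys that fall outside the local window.
        global_only = min(global_end, local_start)
        total += (q + 1 - local_start) + global_only
    return total


if __name__ == "__main__":
    n = 100_000  # assumed prompt length in tokens
    dense = dense_causal_pairs(n)
    sparse = sparse_causal_pairs(n, window=1024, n_global=64)
    print(f"dense pairs:  {dense}")
    print(f"sparse pairs: {sparse}")
    print(f"fraction of work kept: {sparse / dense:.4f}")
```

Under these assumptions the dense count grows quadratically with prompt length while the sparse count grows roughly linearly, which is the general reason selective processing pays off most on very long inputs. Real systems must also choose which positions to keep so that accuracy is preserved, which this sketch does not attempt.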
